Artificial intelligence (AI) is a large part of our society today. We have seen how AI has significantly changed the way things are designed and operated, much to the benefit of the human world. Unknown to many, most AI applications today run on some form of technology from NVIDIA.

NVIDIA plays a crucial role in enabling AI applications and solutions. Their focus on the research and development of AI technologies accelerates the creation of AI solutions for today and tomorrow. By constantly expanding the breadth and depth of their end-to-end AI technologies, NVIDIA has become one of the largest AI-enabling companies in the modern world.

At COMPUTEX 2022, NVIDIA announced a few new technologies and strategies that will prepare the industry for a society that’s even more reliant on AI. The focus was on creating technologies for “AI Factories”, which NVIDIA expects to characterize most data centers in the near future.

While much of the information shared had already been announced at GTC 2022 two months earlier, here are five announcements that were new to us.

-

NVIDIA Grace Server Reference Designs for OEMs

Collaborating with the world’s best system makers, NVIDIA has created a broad array of data center solutions. By working with other server manufacturers, NVIDIA can also expand their reach into various markets through partners’ products that use NVIDIA technologies. Together, they offer hundreds of configurations of x86 and Arm systems to power the world’s need for HPC and AI.

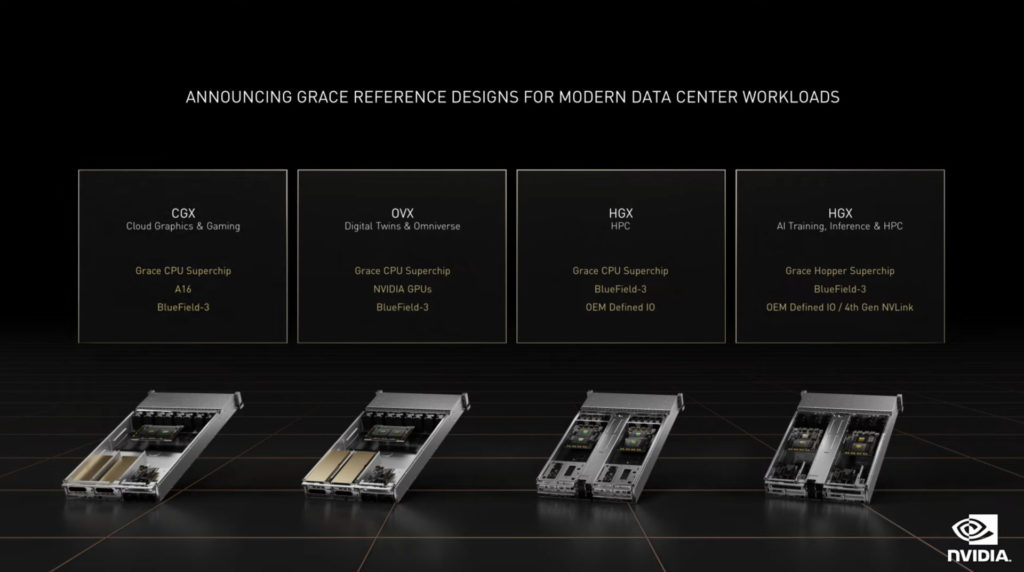

The announcement at COMPUTEX 2022 is the introduction of Grace-based reference server designs for system makers and server suppliers. There are four new reference designs, each catering to a different workload in the data centers of tomorrow: CGX, OVX, HGX for supercomputing and HGX for AI.

NVIDIA also announced the HGX Grace and HGX Grace Hopper systems. NVIDIA will provide the Grace Hopper and Grace CPU Superchip modules, as well as their corresponding reference PCB designs. OEMs can then modify these designs to suit what their customers need. Both systems are designed with a 2U high-density server chassis in mind.

ASUS, Foxconn, GIGABYTE, Quanta Cloud Technology, Supermicro and Wiwynn are part of the first wave of system providers. The Grace systems will start shipping in the first half of 2023.

-

NVIDIA Liquid-Cooled A100 GPU

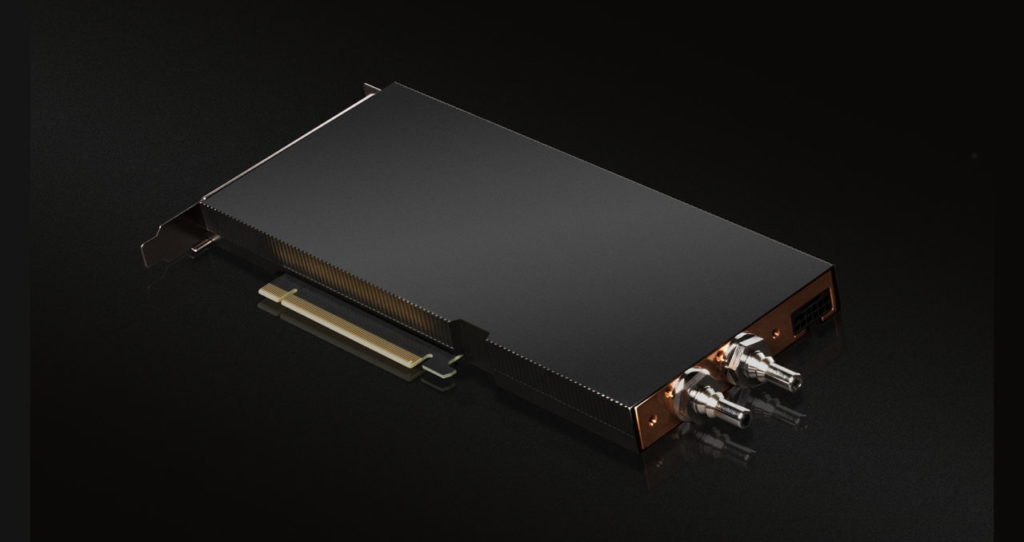

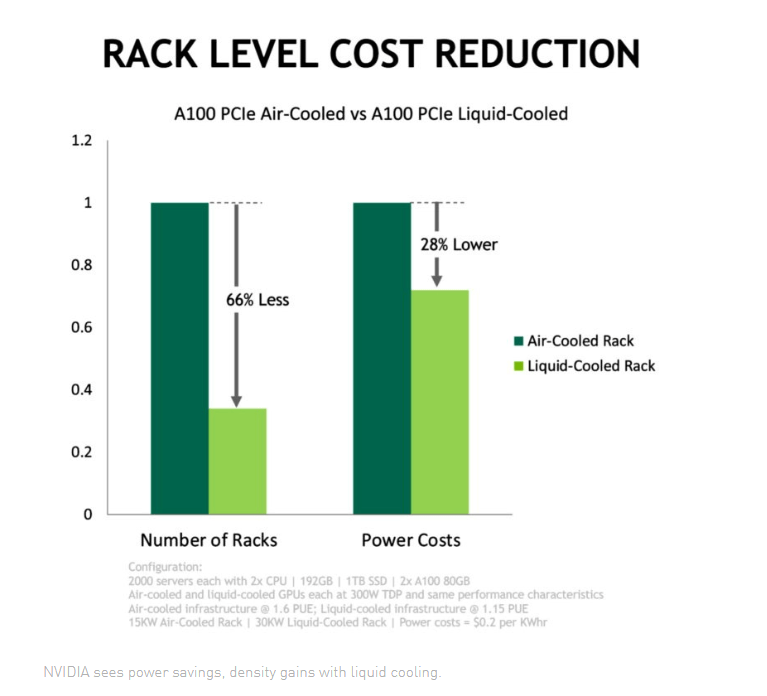

In response to growing demand for high-performance, green data centers, NVIDIA has also announced a liquid-cooled A100 PCIe GPU for their customers. Liquid-cooling the GPUs can provide the same performance levels while using less power.

At the same time, the air-cooled A100 GPU uses two expansion slots, whereas the liquid-cooled A100 uses just one, so servers can essentially house twice the number of A100 GPUs in the same physical space. Doubling the number of GPUs in this way would increase the GPU compute density of each server.
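The slot arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name `gpus_per_server` and the 8-slot server are hypothetical and not taken from NVIDIA's announcement, and a real server is also limited by power, cooling capacity and available PCIe lanes.

```python
def gpus_per_server(total_slots: int, slots_per_gpu: int) -> int:
    """Return how many GPUs fit when each card occupies `slots_per_gpu` slots."""
    if total_slots < 0 or slots_per_gpu <= 0:
        raise ValueError("total_slots must be >= 0 and slots_per_gpu must be > 0")
    return total_slots // slots_per_gpu


# Hypothetical server with 8 expansion slots (an assumption for illustration).
TOTAL_SLOTS = 8

air_cooled = gpus_per_server(TOTAL_SLOTS, slots_per_gpu=2)     # dual-slot card
liquid_cooled = gpus_per_server(TOTAL_SLOTS, slots_per_gpu=1)  # single-slot card

print(f"Air-cooled A100s:    {air_cooled}")
print(f"Liquid-cooled A100s: {liquid_cooled}")
print(f"Density ratio:       {liquid_cooled / air_cooled:.1f}x")
```

With these assumed numbers, the single-slot card fits twice as many GPUs into the same slot budget, which is the doubling described above.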

At least a dozen system makers plan to incorporate these liquid-cooled GPUs into their offerings later this year. They include ASUS, ASRock Rack, Foxconn Industrial Internet, GIGABYTE, H3C, Inspur, Inventec, Nettrix, QCT, Supermicro, Wiwynn and xFusion.

-

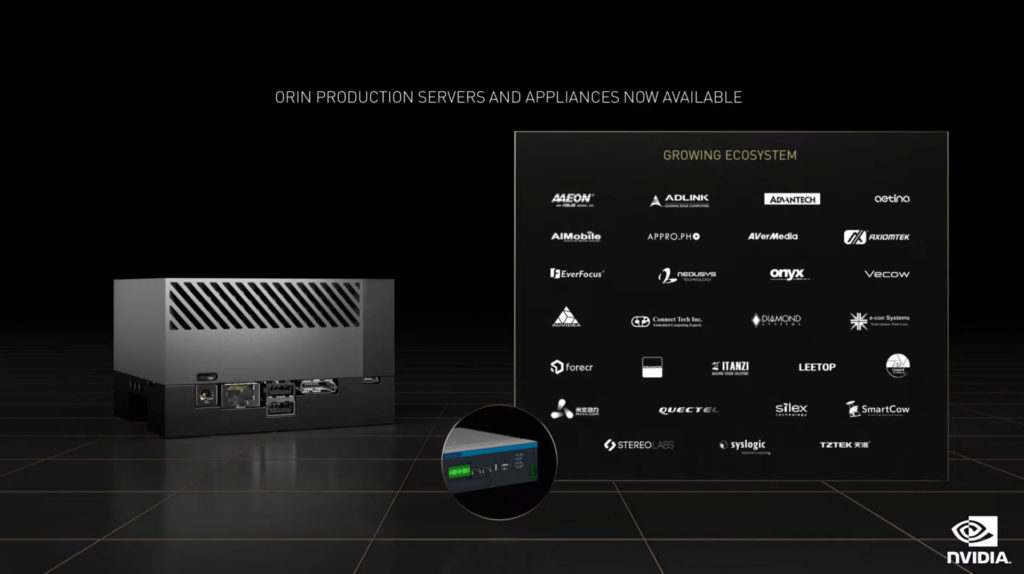

Jetson AGX Orin Production Systems from NVIDIA Partners

Announced at the most recent GTC 2022, the Jetson AGX Orin is a powerful, small-form-factor, energy-efficient AI supercomputer for advanced robotics, autonomous machines and next-generation embedded and edge computing. Jetson AGX Orin delivers 275 trillion operations per second, giving customers over 8x the processing power of its predecessor, Jetson AGX Xavier, while maintaining the same palm-sized form factor and pin compatibility, all at a similar price. It features an NVIDIA Ampere architecture GPU, Arm Cortex-A78AE CPUs, next-generation deep learning and vision accelerators, high-speed interfaces, faster memory bandwidth and multimodal sensor support to feed multiple, concurrent AI application pipelines.

At COMPUTEX 2022, NVIDIA announced that the Jetson AGX Orin is now available as production units through technology partners. Among those releasing systems are Taiwan-based members of the NVIDIA Partner Network, including AAEON, Adlink, Advantech, Aetina, AIMobile, Appropho, AverMedia, Axiomtek, EverFocus, Neousys, Onyx and Vecow.

Other NVIDIA partners launching new Jetson Orin-based solutions worldwide include Auvidea, Basler AG, Connect Tech, D3 Engineering, Diamond Systems, e-Con Systems, Forecr, Framos, Infineon, Leetop, Leopard Imaging, MiiVii, Quectel, RidgeRun, Sequitur, Silex, SmartCow, Stereolabs, Syslogic, Realtimes, Telit and TZTEK, to name a few.

-

Isaac Sim 2022.1 Release

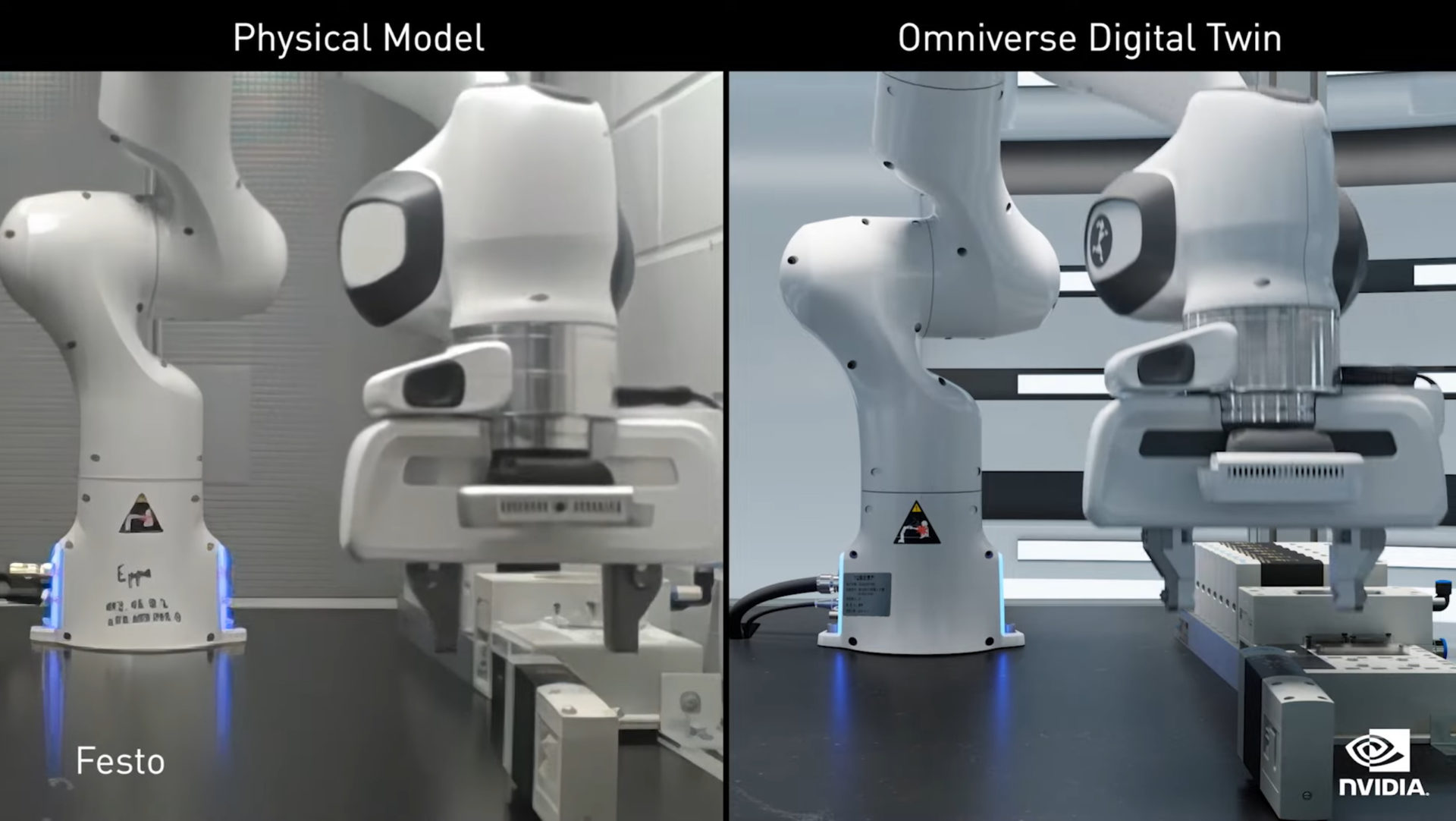

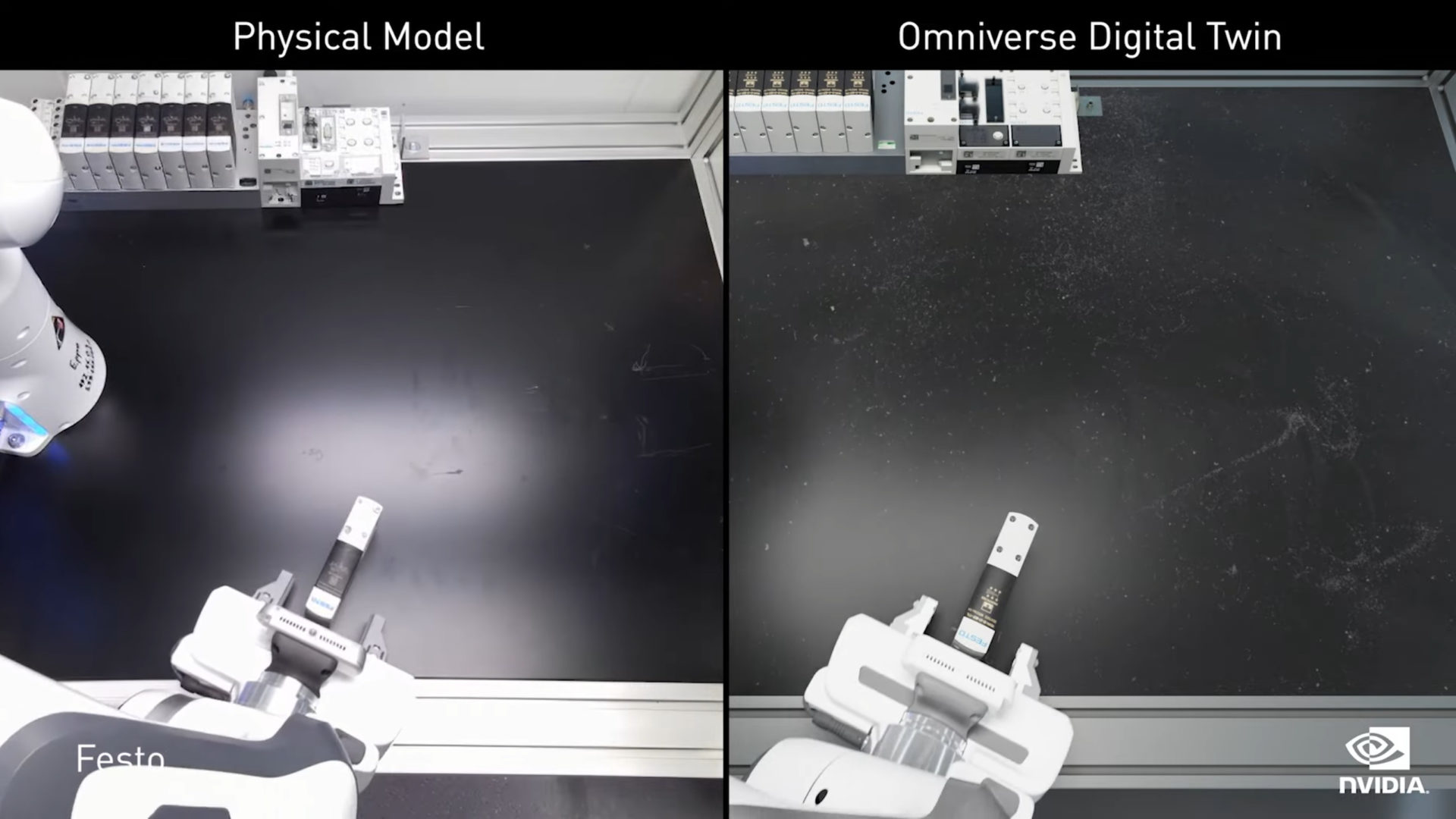

The NVIDIA Isaac Sim 2022.1 release introduces new features that make it the simulator of choice in the age of AI robotics. For example, a new tool called Cortex will make it a lot easier for developers to create and program collaborative robots (co-bots). In addition, a tool called Isaac Gym lets developers use reinforcement learning to train robot control policies in minutes, as opposed to days.

Added to tools carried over from the previous version of Isaac Sim, such as Isaac Replicator (a synthetic data generation tool), Cortex and Isaac Gym make the simulator a lot more comprehensive and make it easier for developers and engineers to create their robots.

-

NVIDIA DRIVE Hyperion Supplier Partners and Production Roadmap

As an open platform, NVIDIA DRIVE Hyperion is used by manufacturers all over the world to build their next-generation autonomous vehicle systems. As planned, Hyperion version 8 will ship in all new Mercedes-Benz vehicles starting in 2024, followed by Jaguar and Land Rover cars and SUVs in 2025. Recently at GTC 2022, NVIDIA also announced that the new Hyperion version 9 will ship in vehicles starting in 2026.

To ensure that the DRIVE Hyperion platform can reach car manufacturers, NVIDIA also announced Foxconn, Quanta Computer and Desay as their newest DRIVE Hyperion supplier partners. These partnerships help OEMs scale the technology into production applications.