NVIDIA Blackwell has set new records in the MLPerf Inference v5.0 benchmarks, demonstrating a significant leap in AI inference performance.

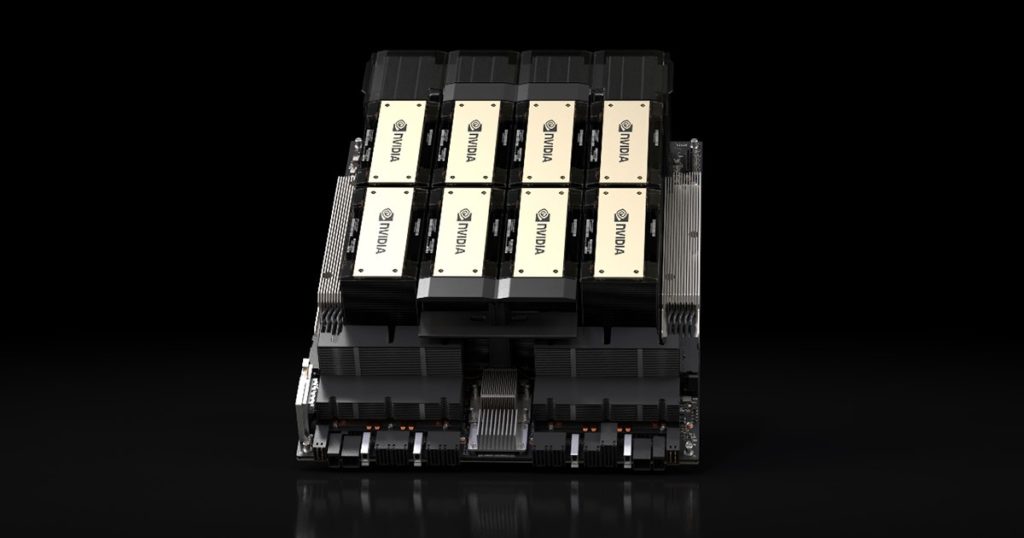

Tested on the GB200 NVL72 system, NVIDIA’s flagship rack-scale AI reasoning solution, Blackwell achieved 30 times higher throughput on the Llama 3.1 405B benchmark than the NVIDIA H200 NVL8. The gain is attributed to the system’s 72 interconnected Blackwell GPUs, which function as a single high-performance unit, and to an NVIDIA NVLink interconnect domain that is nine times larger (72 GPUs versus 8).

The latest iteration of MLPerf Inference introduces new challenges, including the Llama 3.1 405B model—one of the largest open-weight models available—as well as a revised Llama 2 70B Interactive benchmark with stricter latency constraints designed to reflect real-world deployment conditions.

A key highlight of the benchmarks is responsiveness, evaluated using two metrics: Time to First Token (TTFT), which measures how quickly a response begins, and Time Per Output Token (TPOT), which measures how quickly each subsequent token is delivered. The updated Llama 2 70B Interactive benchmark requires a TPOT five times lower and a TTFT 4.4 times lower than the original Llama 2 70B benchmark, ensuring a more responsive AI experience. NVIDIA’s submission, powered by a DGX B200 system with eight Blackwell GPUs, delivered triple the performance of an eight-GPU NVIDIA H200 system, setting a new benchmark for AI responsiveness.
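To make the two responsiveness metrics concrete, here is a minimal Python sketch of how TTFT and TPOT could be computed from per-token arrival timestamps. It is illustrative only: the names `LatencyMetrics` and `latency_metrics` are hypothetical, and the TPOT formula used (time between first and last token, divided by the number of tokens after the first) is one common convention, not necessarily the exact definition the MLPerf harness applies.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class LatencyMetrics:
    """Hypothetical container for the two responsiveness metrics, in seconds."""
    ttft: float  # Time to First Token
    tpot: float  # Time Per Output Token (averaged over tokens after the first)


def latency_metrics(request_time: float, token_times: Sequence[float]) -> LatencyMetrics:
    """Compute TTFT and TPOT for one request.

    request_time: when the request was sent.
    token_times:  arrival time of each output token, in ascending order.
    Assumption: TPOT averages the gaps between consecutive output tokens.
    """
    if not token_times:
        raise ValueError("at least one output token is required")

    # TTFT: delay between sending the request and receiving the first token.
    ttft = token_times[0] - request_time

    # TPOT: mean gap between tokens after the first; undefined for a single
    # token, so report 0.0 in that case.
    n = len(token_times)
    tpot = (token_times[-1] - token_times[0]) / (n - 1) if n > 1 else 0.0

    return LatencyMetrics(ttft=ttft, tpot=tpot)


if __name__ == "__main__":
    # Example: request sent at t=0.0 s, four tokens arriving 0.25 s apart.
    m = latency_metrics(0.0, [0.5, 0.75, 1.0, 1.25])
    print(f"TTFT = {m.ttft:.3f} s, TPOT = {m.tpot:.3f} s")
```

Under this convention, a stricter interactive benchmark corresponds to lower allowed ceilings on both values: the first token must arrive sooner, and the gaps between later tokens must be shorter.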

By combining the Blackwell architecture with an optimized software stack, NVIDIA continues to push the boundaries of inference performance, enabling AI factories to achieve greater intelligence, higher throughput, and faster token generation.

While the Hopper architecture may no longer represent NVIDIA’s latest innovation, it remains foundational to AI advancements. Through ongoing software enhancements, NVIDIA has boosted throughput for Hopper-based AI systems, achieving up to 1.5x performance gains on the H100 GPU and 1.6x on the H200 GPU in the Llama 2 70B benchmark. Furthermore, Hopper remains fully compatible with the latest Llama 3.1 405B, Llama 2 70B Interactive, and graph neural network tests, ensuring continued relevance.

MLCommons’ continuous refinement of the MLPerf Inference benchmark suite ensures that AI infrastructure evolves in step with technological progress. By providing rigorous, peer-reviewed performance data, these benchmarks serve as an invaluable resource for IT decision-makers seeking the most effective AI solutions.