The era of using artificial intelligence to generate a disjointed string of text or a solitary, generic image is rapidly coming to a close. Today, the focus has shifted toward building complete, functional prototypes almost instantly.

With the dual release of Qwen3.5-Omni and Wan2.7-Image, Alibaba has introduced an all-encompassing creative toolkit designed to dramatically accelerate how developers and creators bring their ideas to life.

Coding by demonstration with Qwen3.5-Omni

At the heart of this prototyping revolution is Qwen3.5-Omni, Alibaba’s most advanced omni-modal large language model to date. While its ability to process text, audio, images, and video is impressive, its most transformative feature for developers is its “Audio-Visual Vibe Coding” capability.

By leveraging native multimodal scaling, Qwen3.5-Omni lets users code by demonstration. Instead of writing boilerplate code from scratch, a developer or designer can simply hold a hand-drawn product sketch up to their camera and verbally describe how it should work.

In response, the model autonomously generates a functional user interface (UI) for websites, apps, and mini-games. This intuitive “show-and-tell” process completely transforms the early stages of software creation, dramatically lowering the barrier to prototyping and development.

Populating the prototype with Wan2.7-Image

Of course, functional UI code is only half the battle; to become a true prototype, it also needs striking, brand-consistent visual assets. This is where Wan2.7-Image steps in to handle the visual design process.

Historically, AI-generated images have suffered from a generic, standardized appearance and highly unpredictable colour rendering, leading to a frustrating process of trial-and-error for professional designers.

Wan2.7-Image addresses these shortcomings by giving creators far greater control over their final outputs. Through a new "colour palette" feature, users can enter specific colour codes and proportions directly into their prompts to replicate complex artistic styles or lock in exact corporate brand colours.

This helps ensure that every image matches a company's strict visual guidelines.

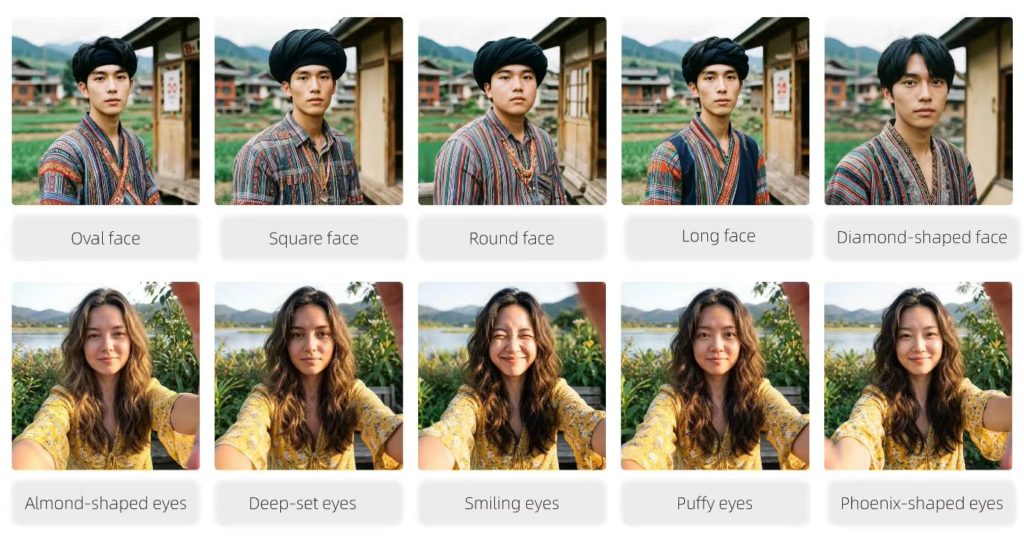

Furthermore, the Alibaba model allows creators to fine-tune specific physical attributes, such as eye shape and bone structure, to generate truly unique and lifelike characters tailored to a specific project.

Images generated by Wan2.7-Image

Because Wan2.7-Image can process multiple images and generate up to 12 visual assets at once, creators can effortlessly build cohesive e-commerce campaigns, architectural renderings, or storyboards to populate their newly coded applications.

Revolutionizing the creative workflow

By pairing the rapid, multimodal coding abilities of Qwen3.5-Omni with the precise, professional-grade visual generation of Wan2.7-Image, Alibaba is offering a true "prompt-to-prototype" ecosystem. Creators no longer have to stitch together mismatched AI outputs; instead, they have a streamlined path from a simple handwritten sketch directly to a polished, fully functional digital product.